Multi-View Data Augmentation to Improve Wound Segmentation on 3D Surface Model by Deep Learning

Abstract

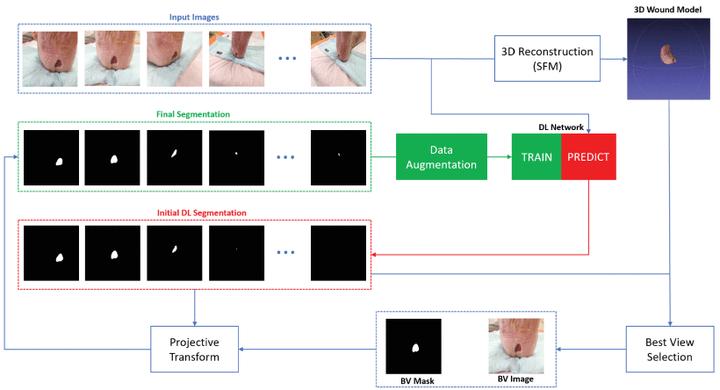

Wound area segmentation has progressed considerably with the emergence of deep learning, owing to its robustness to uncontrolled lighting and the absence of any need for hand-crafted features, but two limitations remain. First, its performance relies on the size and quality of the training dataset, and in the medical field data annotation is costly and time-consuming. Second, segmentation accuracy depends strongly on camera distance and angle, and perspective effects prevent the measurement of real surface areas from single views. To address these two issues together, we propose to apply multi-view modeling: an image sequence acquired around the wound site enables 3D reconstruction of the wound. A segmentation step is then run to roughly extract the wound from the background in each view, and the best view is selected with an original strategy. This view provides the most accurate segmentation and the real wound bed area, even on non-planar wounds. Finally, this segmentation is backprojected into each view to generate a complete set of well-annotated real images that reinforce the learning step of the neural network. In our experiments, we compare several strategies for selecting the best view in the image sequence. The proposed method, tested on a dataset of 270 images, outperforms the standard single-view deep learning approach, as measured by the Dice index and IoU score. These results attest to the robustness of our method and its improved accuracy in the wound segmentation task.
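
For readers unfamiliar with the two evaluation metrics named above, the following minimal sketch shows how the Dice index and IoU score are commonly computed for a pair of binary masks. It assumes the standard definitions, Dice = 2|P ∩ T| / (|P| + |T|) and IoU = |P ∩ T| / |P ∪ T|; the function name `dice_and_iou`, the nested-list mask representation, and the convention for two empty masks are illustrative assumptions, not details taken from this work.

```python
def dice_and_iou(pred, truth):
    """Return (Dice, IoU) for two binary masks given as nested lists of 0/1.

    Hypothetical helper for illustration; masks must contain the same
    number of pixels.
    """
    # Flatten both masks into lists of booleans.
    p = [bool(v) for row in pred for v in row]
    t = [bool(v) for row in truth for v in row]
    if len(p) != len(t):
        raise ValueError("masks must have the same number of pixels")

    inter = sum(a and b for a, b in zip(p, t))  # |P ∩ T|
    total = sum(p) + sum(t)                     # |P| + |T|
    union = total - inter                       # |P ∪ T|

    if total == 0:
        # Assumed convention: two empty masks agree perfectly.
        return 1.0, 1.0
    return 2 * inter / total, inter / union


# Toy example: predicted mask vs. ground-truth mask (2 x 3 pixels).
pred = [[1, 1, 0],
        [0, 1, 0]]
truth = [[1, 0, 0],
         [0, 1, 1]]
dice, iou = dice_and_iou(pred, truth)
print(f"Dice = {dice:.3f}, IoU = {iou:.3f}")  # Dice = 0.667, IoU = 0.500
```

Under these definitions the two scores are monotonically related, Dice = 2·IoU / (1 + IoU), so they rank segmentations identically on a single image and differ mainly in how strongly partial overlaps are penalized.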